According to the latest McKinsey report (The State of AI), in 28% of organizations using artificial intelligence, the CEO personally oversees AI governance. In 17% of companies, responsibility for these matters rests with the board as a whole. Interestingly, in many cases responsibility is shared among several people – on average, two leaders are identified as responsible for AI-related projects.

Sounds like a solid organizational structure? Not necessarily.

"Two leaders responsible" often means that no one is fully responsible. When responsibility is shared, decisions tend to be delayed because each party waits for the other to make a move. When governance is "shared," risk belongs to no one – it's easy to assume someone else is watching for potential threats. When "the team" is supposed to defend ROI, ultimately no one stands before the board with concrete numbers in hand, ready to take responsibility for the results.

In our view, this is one of the more important – though often overlooked – reasons why most AI projects die in the pilot phase. The problem isn't always flawed technology or a lack of team competence. It can be diffused responsibility that paralyzes decision-making and prevents effective scaling of the project.

This article shows how to change that, and the change has to happen at the very start of the project, during the planning phase. You'll learn about four key roles that must be filled by specific individuals before your AI project moves beyond the experimental phase. You'll also learn what function each of these roles serves in the project, what their scope of responsibility should be, and how to recognize whether the roles have been properly filled.

Lack of Defined Responsibility Is One of the Key Barriers to Scaling AI

When we look at AI projects that have actually been implemented and deliver measurable business value, we usually see a common denominator: from day one, they had a clearly defined accountability structure. Everyone knew who makes decisions, who's responsible for financial results, who manages risk, and who to turn to when organizational blockers appear.

Is this an absolute requirement? If several people are simultaneously responsible for AI in your organization, is it not even worth trying to implement anything because it won't work anyway? Of course, such a categorical statement would be an exaggeration. However, it's worth being aware that diffused responsibility is a factor that significantly increases the risk of failure for the entire initiative, and certainly greatly complicates scaling the project beyond the pilot phase.

What can happen when responsibility is diffused? Most commonly, it means that every decision requires approval from everyone who is in any way involved in the project. The decision-making process drags on out of all proportion to the importance of the issues being decided. Every risk – even a small and easily manageable one – becomes a reason to halt the project because no one wants to take responsibility for potential consequences. Every cost meets resistance and becomes difficult to justify in the budget because there's no person who can definitively say: "this is my decision and I'm responsible for it." And every organizational blocker, even a seemingly minor one, becomes insurmountable because no one has sufficient mandate to remove it.

Defining the accountability structure isn't boring bureaucracy or corporate formality. It's a foundation necessary for every project – including, and perhaps especially, those using artificial intelligence.

Without clearly assigned roles, AI implementation becomes an "IT project," an "innovation experiment," or a "digital initiative" – it sounds good on slides and in quarterly reports. However, such a project usually doesn't develop enough to become part of the core business and deliver real value. This happens because no one truly has it within their scope of responsibility, no one is held accountable for its results, and no one has the motivation to push it through inevitable organizational obstacles.

Four Main Roles to Fill in an AI Project

To facilitate our clients' work, we've built a simple but effective strategic tool – the AI Transformation Canvas. It helps organizations plan AI transformation in a structured and comprehensive way: from defining the business problem, through analyzing available data and selecting technology, to establishing governance and defining success metrics. You can download the Canvas and use it as a starting point for planning AI projects in your organization.

At the center of the AI Transformation Canvas is the "Ownership & Accountability" area – this is where we define four key roles that must be filled by specific individuals before the AI project starts in earnest. Without completing this section, the other elements of the Canvas – even the most well-thought-out ones – won't translate into effective implementation.

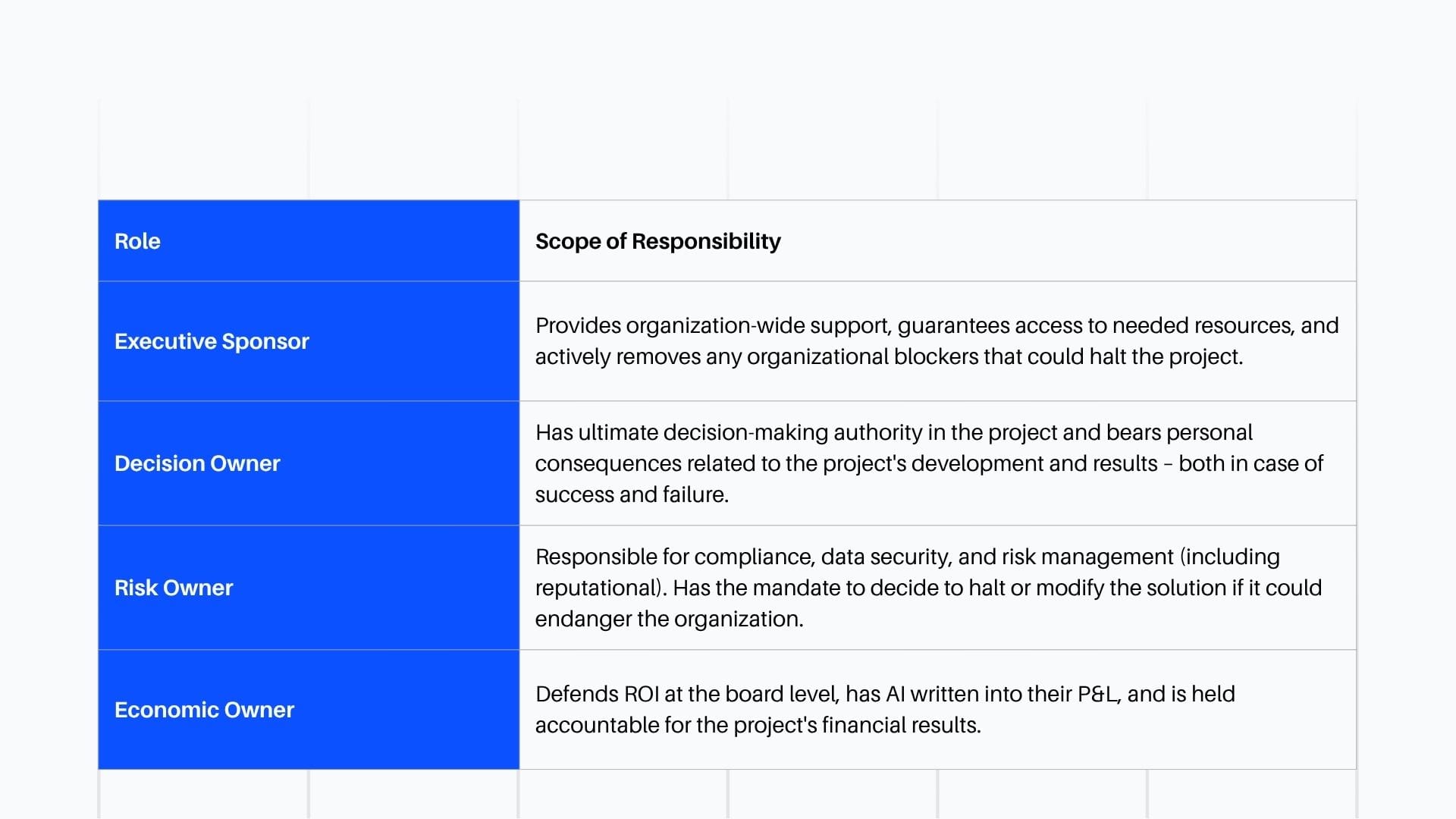

Overview of the Four Roles You Should Fill

Why are these four roles key?

Because they answer four fundamental questions that the board must ask in the context of every major project – not just AI-related ones. These questions always come up, sooner or later, and the organization must be ready to answer them:

- Who's responsible for this and who makes key decisions? → Decision Owner

- Who's funding this and who's responsible for return on investment? → Economic Owner

- Who manages risk and ensures the project doesn't harm the organization? → Risk Owner

- Who will remove organizational obstacles when the project gets stuck? → Executive Sponsor

If you can't answer these questions with specific names – your project most likely isn't ready to scale. It may work in the pilot phase, may deliver promising results under controlled conditions, but collision with organizational reality will probably stop it.

Hierarchy and Relationships Between Roles

These four roles obviously don't operate in isolation – they form a cohesive governance structure for project management. Each operates at a different level of the organization and reports differently:

- The Executive Sponsor operates at the board level, providing strategic and political support. This is the person who can open doors that remain closed to others.

- The Decision Owner operates at the operational level, making specific executive decisions about the project's direction. This is the person the team turns to when they need a resolution.

- The Economic Owner reports to the CFO or CEO, defending the project's financial value and justifying costs incurred. This is the person who must be able to "sell" the project in the language of numbers.

- The Risk Owner collaborates with legal, compliance, and security departments, managing risk in a systematic and cross-cutting manner. This is the person who sees threats that others may not notice.

Understanding these relationships is key because it helps avoid competency conflicts and ensures that every issue reaches the right person.

Executive Sponsor: Who Removes Blockers and Ensures Cross-Functional Support?

The Executive Sponsor is a person at the C-suite or senior leadership level who ensures that the AI project has political support, access to resources, and appropriate priority in the organization. This role is particularly crucial in large organizations where cross-functional projects – and almost all AI projects are cross-functional – must break through organizational silos and compete for resources with other initiatives.

Main Tasks of the Executive Sponsor

- Removing organizational blockers – when the project needs resources, data, or support from another department, and that department isn't willing to cooperate, the Executive Sponsor intervenes and resolves the impasse. They have sufficient position in the organization for such interventions to be effective.

- Ensuring cross-functional collaboration – AI projects rarely fit within one department. They usually require collaboration between IT, business, legal, operations, and others. The Sponsor's task is to break down silos and build bridges between different parts of the organization.

- Protecting the project from priority shifts – in every organization, new "urgent" initiatives regularly appear that compete for attention and resources. The Executive Sponsor defends the AI project from being pushed aside and ensures it maintains its priority.

- Opening doors – providing access to key stakeholders and decision-makers who may be inaccessible to the project team, and arranging meetings that wouldn't happen without the Sponsor's involvement.

This is NOT a ceremonial role. The Executive Sponsor isn't an "honorary patron" whose name is written into documentation for prestige. This is a person who actively acts when the project stalls – and who is ready to invest their time and political capital so the project can move forward.

How Does a Good Executive Sponsor Operate?

Good example: A COO who personally removes a blocker to accessing operational data. When the AI team has been unable to obtain the data it needs from the operations department for two weeks, the COO organizes a meeting between IT and operations within 48 hours and personally participates. After the meeting, the data is made available within a week.

Bad example: A CEO who is "sponsor" of 15 different projects and doesn't remember what this AI project specifically does. When the team asks for intervention, the response comes after three weeks and reads: "talk to the department director."

Red Flags – Warning Signs

Pay attention to the following signals that may indicate the Executive Sponsor role isn't properly filled:

- The Sponsor doesn't participate in key project meetings and isn't up to date with its progress

- The Sponsor has no real executive power in the organization – has the title but no influence

- The Sponsor is a "committee" or "board of directors" – meaning effectively no one specific

- The Sponsor is so busy with other responsibilities that the AI project is marginal to them

Qualifying Question

Before you decide that a given person will be the Executive Sponsor of your project, ask yourself one question:

Has this person ever removed an organizational blocker in any project?

If yes – take a closer look at how they did it and how long it took. If no – consider whether there really are no blockers in your organization (which is unlikely), or whether this person simply doesn't act effectively in a role that requires active intervention (which is much more likely).

Decision Owner: Who Has Ultimate Decision-Making Authority and Bears Its Consequences?

The Decision Owner is the person who has a formal mandate to make the final decision on key project matters – and who will bear personal consequences if that decision turns out to be wrong or isn't made in time.

Why Is the Decision Owner Critical?

Because in many organizations, decisions are made by committees, working groups, or through informal consultations among many people. And this, in our view, is one of the more significant causes of decision paralysis in AI projects.

A committee doesn't bear responsibility – responsibility is diffused among all its members. No one is personally accountable for the outcome, so no one has sufficient motivation to make a risky decision. It's easier to "wait for more data," "consult with additional experts," or "analyze alternative scenarios" – and the project stands still.

A specific person – the Decision Owner – bears responsibility. They have a face, a name, and an annual review where the project's outcome will appear. This changes the dynamics of decision-making.

Main Tasks of the Decision Owner

- Making key decisions – about project scope, technical architecture, integration with existing systems, and the timing and method of scaling. These are decisions that determine the project's direction and chances of success.

- Accepting responsibility for consequences – if the decision turns out to be wrong, the Decision Owner is accountable. They don't hide behind the team, a committee, or external circumstances. This responsibility is the price for decision-making authority.

- Resolving disputes – when the team has different visions, when experts disagree, when conflicts of interest arise between departments, the Decision Owner makes the final decision and closes the discussion.

- Maintaining momentum – prevents "analysis paralysis," the situation where the project gets stuck in endless analyses and never moves to action. The Decision Owner knows when there's enough information to make a decision.

How Does a Good Decision Owner Operate?

Good example: A VP of Operations who decided that the AI demand forecasting system would be deployed first in two pilot regions, and then – after verifying results – scaled nationwide. They took personal responsibility for results in those regions and committed to monthly progress reports to the board.

Bad example: A "steering committee" of seven people that meets once a month and "discusses" next steps. Every meeting ends with a list of "points to clarify" and another meeting in a month. No one makes decisions because no one wants to take responsibility for them.

Difference Between Decision Owner and Executive Sponsor

These two roles are often confused, but they serve completely different functions:

- Executive Sponsor = removes organizational blockers, provides political support, opens doors. Operates "outside" the project.

- Decision Owner = makes operational decisions about the project itself, bears consequences for the outcome. Operates "inside" the project.

These can be different people – and often should be, especially in large organizations. The Executive Sponsor may not have the time or competence to make detailed operational decisions. Meanwhile, the Decision Owner may not have sufficient position to remove blockers at the organization-wide level.

However, in smaller organizations or in smaller-scale projects, the same person may fulfill both roles. This is acceptable as long as the scope of responsibility for both roles is clear and properly executed. It's important that this person is aware of when they're acting as Sponsor (removing a blocker) versus when they're acting as Decision Owner (making an operational decision).

Qualifying Question

Before you conclude that your project has a Decision Owner, honestly answer one question:

If the AI project stalls due to lack of key decisions – who specifically will bear consequences in their annual review?

If the answer is "the whole team," "hard to say," or "it depends on the reasons for failure" – you don't have a Decision Owner. You have a group of people who are involved in the project, but none of them are responsible for it.

Risk Owner: Who's Responsible for Compliance, Security, and Reputation?

Projects using artificial intelligence involve new types of risk that don't occur – or occur to a much lesser extent – in traditional IT projects. We're talking about reputational risk (what if AI says something offensive?), regulatory risk (how do we ensure compliance with GDPR and the EU AI Act?), ethical risk (are our models discriminating?), and operational risk (what if the model stops working correctly?).

The Risk Owner is the person responsible for ensuring the AI project doesn't harm the organization – in either the short or the long term.

Main Tasks of the Risk Owner

- Managing compliance risk – ensuring compliance with GDPR, the EU AI Act, industry regulations (e.g., in the financial or medical sector), and the organization's internal policies. This isn't a one-time analysis but continuous monitoring.

- Protection against reputational risk – what happens if AI makes a controversial decision that ends up in the media? What if it gives a customer false information? The Risk Owner must anticipate such scenarios and prepare the organization to handle them.

- Data and model security – protection against data leakage, attacks on the model (adversarial attacks), model quality degradation over time (model drift). These are technical risks that require collaboration with IT security teams.

- Auditability and transparency – can the organization explain why AI made a specific decision? This is increasingly important in the regulatory context, but also in terms of customer and employee trust.
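
The model drift item above can be made concrete with a periodic check against a fixed benchmark. The following is only a minimal sketch under stated assumptions: the function names, the exact-match scoring, the 0.05 tolerance, and the sample data are all hypothetical, and a real evaluation would likely need richer quality metrics.

```python
def benchmark_accuracy(predictions: list[str], expected: list[str]) -> float:
    """Share of benchmark cases where the model output equals the expected answer.

    Exact string matching is a simplifying assumption for illustration.
    """
    if len(predictions) != len(expected) or not expected:
        raise ValueError("predictions and expected must be non-empty and of equal length")
    correct = sum(p == e for p, e in zip(predictions, expected))
    return correct / len(expected)


def has_degraded(current: float, baseline: float, tolerance: float = 0.05) -> bool:
    """True if current accuracy fell more than `tolerance` below the baseline."""
    return (baseline - current) > tolerance


# Hypothetical benchmark run, for illustration only.
expected = ["approve", "reject", "approve", "escalate"]
predictions = ["approve", "reject", "reject", "escalate"]

score = benchmark_accuracy(predictions, expected)
print(score)                               # 0.75
print(has_degraded(score, baseline=0.90))  # True
```

Running such a check on a schedule, and again whenever the underlying model is changed, gives the Risk Owner a concrete number to act on instead of a general impression.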

Why Is the Risk Owner Not Just "Legal & Compliance"?

It's tempting to assign the Risk Owner role to the legal or compliance department. After all, they deal with risk, right? Not entirely.

Risk in AI projects isn't just legal risk. It's also:

- Business risk – what if the model stops working correctly and customers lose trust in it? What if competitors exploit our mistakes?

- Technical risk – what if the model starts hallucinating? What if the model provider's API stops responding for an hour? What if changing to a newer model causes degradation in system performance? What if the data we use in the process turns out to be insufficient or incorrect?

- Operational risk – what if AI does something that paralyzes a key business process?

The Risk Owner must think holistically – see the full picture of risk, not just its legal slice. They must collaborate with legal, IT security, operations, PR, and other departments, integrating their perspectives into a coherent risk management strategy.

How Does a Good Risk Owner Operate?

Good example: A Chief Risk Officer who personally reviews the model testing plan with edge cases and failure scenarios in mind. Collaborates with the legal department on documenting decisions made by AI so the organization can explain them in case of an audit or complaint. Establishes specific procedures with operations for handling anomalies in model behavior. Ensures proper system "observability" (a logging and tracing system such as Langfuse) and regular evaluation (periodic tests on benchmark data plus quality assessment).

Bad example: A "compliance team" without a specific person responsible, who "will monitor the situation" and "prepare recommendations if problems arise." No one is personally responsible, no one takes proactive action, and problems are only addressed after the fact – if at all.

Qualifying Question

Answer one question:

If AI makes a mistake that leads to financial loss or a reputational crisis – who will have to stand before the board and explain what went wrong and what remedial steps have been taken?

If you don't know a specific name – you don't have a Risk Owner. At best, you have hope that nothing bad will happen.

Economic Owner: Who Defends ROI and Has AI in Their P&L?

The Economic Owner is the person who has the AI project written into their budget (P&L) and who must defend the return on investment at the board level. This role forces the organization to treat AI not as a technological experiment but as a business investment with specific financial expectations.

Why Is the Economic Owner Key?

Because AI costs money. Infrastructure, data, talent, integration with existing systems, maintenance and development – all of this generates costs, often significant ones. And if no one has these costs in their P&L, the project quickly becomes an "IT cost" or "innovation investment" that never demonstrates real ROI.

The Economic Owner fundamentally changes how people think about the project. The question stops being "is AI interesting?" or "are we innovative?" and becomes "does AI pay off?" and "when will we see return on investment?" These are brutal but necessary questions if the project is to survive the next round of budget cuts.

Main Tasks of the Economic Owner

- Defining the project's financial model – how much the project costs (one-time and ongoing), how much value it will generate (savings, additional revenue, avoided costs), when it will pay off. This must be calculated and documented, not based on general promises.

- Defending ROI before the board – explaining the project's business value in financial language that the CFO and CEO understand. Not in technological language ("we have the latest LLM model"), but in business language ("we reduced customer service costs by 15%").

- Monitoring return on investment – regularly tracking costs vs. value generated over time. Are we on track to achieve the assumed ROI? Have unexpected costs appeared? Is value materializing according to plan?

- Deciding on scaling or withdrawal – if the project isn't delivering value and has no real chance of improvement, the Economic Owner makes the difficult decision to close it. This is painful but better than continuing a project that only generates costs.
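
The financial model described in the tasks above comes down to a few lines of arithmetic. The sketch below is illustrative rather than a prescribed method: the function names, the assumption of flat monthly costs and value (no ramp-up, no discounting), and all figures are hypothetical.

```python
from typing import Optional


def payback_month(one_time_cost: float,
                  monthly_cost: float,
                  monthly_value: float,
                  horizon_months: int = 36) -> Optional[int]:
    """Return the first month in which cumulative value covers cumulative cost.

    Simplifying assumption: costs and value are flat per month.
    Returns None if the project does not pay back within the horizon.
    """
    cumulative_net = -one_time_cost
    for month in range(1, horizon_months + 1):
        cumulative_net += monthly_value - monthly_cost
        if cumulative_net >= 0:
            return month
    return None


def roi(one_time_cost: float, monthly_cost: float,
        monthly_value: float, months: int) -> float:
    """Simple ROI over a period: (total value - total cost) / total cost."""
    total_cost = one_time_cost + monthly_cost * months
    total_value = monthly_value * months
    return (total_value - total_cost) / total_cost


# Hypothetical figures, for illustration only.
print(payback_month(120_000, 10_000, 25_000))      # 8
print(round(roi(120_000, 10_000, 25_000, 24), 2))  # 0.67
```

Even a model this simple forces the two inputs the Economic Owner must defend: what the project costs each month and what value it returns each month.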

How Does a Good Economic Owner Operate?

Good example: A VP of Finance who has the AI project written into their P&L and is held accountable for it. Regularly reports ROI to the board using specific numbers. Can show how much AI reduced operational costs or increased revenue compared to a scenario without AI. When results are worse than assumptions, initiates discussion about causes and possible corrections.

Bad example: "The CTO is responsible for project finances" – but the AI project isn't part of their budget, so they don't bear real financial consequences. Costs are "somewhere" in the IT budget, and business value is "difficult to measure." No one can say whether the project pays off.

Difference Between Economic Owner and Decision Owner

These roles address different dimensions of project success:

- Decision Owner = responsible for operational success. Does the project work? Does it achieve its functional goals? Is it implemented according to plan?

- Economic Owner = responsible for financial success. Does the project pay off? Does it deliver value greater than costs incurred?

Most often these are different people because the roles require different competencies and perspectives. The Decision Owner thinks in terms of "does this work and does it solve the problem," while the Economic Owner thinks in terms of "does this pay off and is it worth continuing the investment." Both perspectives are needed.

Of course, it may happen that the same person fulfills both roles – especially when the project is smaller or when the organization is less complex. However, it's important that this person consciously switches between both perspectives.

Qualifying Question

Answer one question honestly:

Who will have to explain to the CFO why the AI project didn't pay off in the expected timeframe?

If the answer is "not applicable, this is an innovation project" or "hard to say because we don't have a defined ROI" – you don't have an Economic Owner. And you probably don't have a plan for how to measure your project's business value – which means the project is particularly vulnerable to budget cuts.

How to Use the AI Transformation Canvas in Practice: The Role Assignment Stage

You already have the AI Transformation Canvas. You have basic information about each of the four key roles. Now it's time to move from theory to practice and actually fill these roles in your project.

Step 1: Complete the "Ownership & Accountability" Section in the AI Transformation Canvas

Take the Canvas and find the section on ownership. Ask yourself four questions – and for each role, write a specific name, not a position or department:

- Executive Sponsor: Who will remove organizational blockers and ensure cross-functional support when the project gets stuck?

- Decision Owner: Who has ultimate decision-making authority on key matters and bears personal consequences for the project's results?

- Risk Owner: Who's responsible for compliance, security, and reputational risk? Who will stand before the board if something goes wrong?

- Economic Owner: Who has AI in their P&L and will defend ROI at the board level?

If you can't write a specific name for any role – that's a signal that you must first solve this problem before the project moves forward.
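
The check in this step can be made mechanical. Below is a minimal sketch, assuming the "Ownership & Accountability" section is captured as a simple mapping from role to whatever was written on the Canvas. The function name, the list of collective words, and the sample names are hypothetical and not part of the Canvas itself.

```python
ROLES = ("Executive Sponsor", "Decision Owner", "Risk Owner", "Economic Owner")

# Words suggesting a group or department was entered instead of a person.
# This list is an illustrative assumption.
COLLECTIVE_WORDS = {"team", "committee", "board", "department", "group"}


def unfilled_roles(assignments: dict[str, str]) -> list[str]:
    """Return roles that have no name or that name a collective body."""
    problems = []
    for role in ROLES:
        name = assignments.get(role, "").strip()
        is_collective = bool(set(name.lower().split()) & COLLECTIVE_WORDS)
        if not name or is_collective:
            problems.append(role)
    return problems


# Hypothetical Canvas entries, for illustration only.
canvas = {
    "Executive Sponsor": "Anna Kowalska",
    "Decision Owner": "Steering committee",
    "Risk Owner": "",
    "Economic Owner": "Jan Nowak",
}
print(unfilled_roles(canvas))  # ['Decision Owner', 'Risk Owner']
```

A non-empty result means the project has roles that exist only on paper and should not move forward yet.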

Step 2: Do a "What If" Test

Just writing names isn't everything. You need to verify that these people will actually be able to fulfill their roles when needed. The best way is to conduct a simple scenario test.

Below you'll find example scenarios you can use. Of course, it's worth coming up with more – the more scenarios you walk through as a dry run, the better prepared your governance structure will be for real challenges.

Scenario 1: The AI project needs access to data from another department, but that department has its own priorities and isn't willing to cooperate. The director of that department claims they "don't have resources" to prepare the data. What happens?

→ The Executive Sponsor should intervene and resolve the impasse. Who specifically will do this? How quickly? What tools do they have?

Scenario 2: The AI model gives unexpected results – significantly different from what you assumed. The team doesn't know whether to continue the current approach, change the model, or perhaps return to the data analysis stage. Expert opinions are divided. What happens?

→ The Decision Owner should make a decision and end the discussion. Who specifically will do this? On what basis? How quickly?

Scenario 3: A potential GDPR compliance risk emerges – someone noticed that input data may contain information that shouldn't be processed this way. The team isn't sure whether this is a real problem or a false alarm. What happens?

→ The Risk Owner should assess the risk and decide on remedial measures. Who specifically will do this? What competencies do they have? Who will they consult with?

Scenario 4: Six months after project start, the board asks whether AI is paying off. The CFO wants to see specific numbers. What happens?

→ The Economic Owner should present ROI with specific data. Who specifically will stand before the board? What numbers will they present? Where will they get them from?

If you can't answer these questions with a specific name and describe a specific way of acting – the roles aren't well filled. You have names on paper, but you don't have a functioning governance structure.

Step 3: Formalize the Team

Project success largely depends on whether you build alignment with key people from day one. First among them are the people filling the four roles you've just established. But "verbal agreement" to join the project isn't enough.

You must formalize these people's involvement in the project. This means clearly establishing and documenting:

- Who fills which role – not "who's involved," but who has specific responsibility

- What authority and scope of responsibility they have – what this person can decide independently, and what requires escalation

- How often they report and in what form – whether it's weekly meetings, monthly reports, or ad hoc as needed

- Who they report to – what's the escalation path when problems arise

As a result, you should have a document or slide in a presentation that every project participant understands and accepts. This isn't about bureaucracy for its own sake – it's about everyone having clarity about the accountability structure.

Equally important: the scope of responsibility for each of these people should be clear and officially written into their job duties. A scenario where someone agreed "on the side" to help you with this project alongside their regular tasks is definitely not good. When priority conflicts arise (and they will), such a person will always choose their "official" duties at the expense of the AI project.

FAQ: Most Common Questions About AI Governance Roles

Can the Same People Fill More Than One Role?

It depends on the organizational context.

In small organizations or in smaller-scale projects – yes, they often must. It doesn't make sense to engage C-suite people for a pilot project. But even if one person fills several roles, always document which role they're acting in at any given moment. This helps avoid misunderstandings and conflicts of interest.

Riskiest combinations:

- Decision Owner + Risk Owner – this is a potential conflict of interest. The person responsible for project results may tend to underestimate risk so the project can move forward. The Risk Owner should be able to say "stop" even if the Decision Owner wants to continue.

- Economic Owner + Decision Owner – risk that decisions will be made solely from the perspective of short-term ROI, ignoring other important factors. Pressure to demonstrate quick returns can lead to bad operational decisions.

Beneficial combination:

- Executive Sponsor + Economic Owner – the project can benefit if a person at the COO or CFO level takes full responsibility both financially and organizationally. Such a person has both the resources and motivation for the project to succeed.
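
When one person holds several roles, the combinations above can be checked automatically. This is a minimal sketch, assuming role assignments are stored as a mapping from role to person. The function name and sample names are hypothetical; the two flagged pairs are the ones listed above.

```python
from itertools import combinations

# Pairs described above as risky when held by the same person.
RISKY_PAIRS = {
    frozenset({"Decision Owner", "Risk Owner"}),
    frozenset({"Economic Owner", "Decision Owner"}),
}


def risky_combinations(assignments: dict[str, str]) -> list[tuple[str, str, str]]:
    """Return (person, role_a, role_b) for each risky pair held by one person."""
    findings = []
    # Sort by role name so the output order is deterministic.
    for (role_a, person_a), (role_b, person_b) in combinations(sorted(assignments.items()), 2):
        if person_a == person_b and frozenset({role_a, role_b}) in RISKY_PAIRS:
            findings.append((person_a, role_a, role_b))
    return findings


# Hypothetical assignments, for illustration only.
assignments = {
    "Executive Sponsor": "Anna Kowalska",
    "Economic Owner": "Anna Kowalska",
    "Decision Owner": "Jan Nowak",
    "Risk Owner": "Jan Nowak",
}
print(risky_combinations(assignments))
# [('Jan Nowak', 'Decision Owner', 'Risk Owner')]
```

In this example the Executive Sponsor + Economic Owner overlap is not flagged, since it is the combination described as beneficial.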

What If We Don't Have Someone at the C-Suite Level Who Could Be Executive Sponsor?

This is a red flag. If no one from the highest management level is willing or able to act as Executive Sponsor, it's a sign that the AI project isn't treated as a strategic priority. And this significantly reduces its chances of success.

Alternative: The Executive Sponsor can be a VP or Director with sufficient mandate – but this person must have real executive power in the organization and direct access to the board. If they have to go through three levels of hierarchy to get anything done, they won't be an effective Sponsor.

Are These Roles Needed in Every AI Project?

It depends on the project's scale and stage of development.

- Pilot phase / proof of concept: You can skip full formalization, but you should know who de facto makes decisions and who's responsible for results. Even informally – you must have answers to the four fundamental questions.

- Scaling (impact on core business): Yes, these roles are absolutely needed. Without them, the project may not break through organizational blockers, may not obtain needed resources, and may be stopped at the first difficulties. The larger the project, the more formal the governance structure must be.

Who Should Assign These Roles?

Usually the project lead or the person responsible for the AI initiative assigns the roles. But they can't do it alone – they must have agreement from the people they're engaging in the project.

Moreover, informal agreement isn't enough. It must be an official decision that writes these roles (for this specific project) into the scope of responsibility of the people involved. This means a conversation with these people's supervisors, updating annual goals, and including the project in job responsibilities. Without this formalization, roles will remain on paper, and in practice no one will feel truly responsible.

Summary: From Planning to Action

In summary: AI projects rarely fail due to insufficient technology. The cause of failure is often a lack of defined scope of responsibility in the project and thus a lack of decision-making authority and proper organizational management.

The AI Transformation Canvas is a tool that will help you solve this problem while still in the project planning phase – before these problems actually occur.

The Canvas imposes a structure that forces answers to four fundamental questions:

- Who has ultimate decision-making authority? → Decision Owner

- Who's responsible for return on investment? → Economic Owner

- Who manages risk? → Risk Owner

- Who will remove organizational obstacles? → Executive Sponsor

If you can't answer these questions with specific names – in our view, the project isn't ready for scaling. It may work in the pilot phase, may even deliver promising results, but collision with organizational reality will probably stop it.

Need Support?

If you'd like to discuss the AI Transformation Canvas in the context of your project and talk your ideas through with an experienced AI team, we'd be happy to help.